SEO Forecasting Methods with Examples

Introduction

Most SEO forecasts fail not because the math is wrong, but because the inputs are garbage. A team pulls three months of traffic data, draws a line in Excel, and hands a stakeholder a number that has roughly the same predictive power as a coin flip. Six months later, the forecast is off by 60%, trust is gone, and the SEO budget gets cut.

This guide covers practical SEO forecasting methods: the ones that actually hold up when a CFO asks questions. Whether you're building an SEO forecasting template for a client, running an organic traffic prediction model for a board presentation, forecasting SEO for budget planning, or trying to forecast the SEO impact of site changes before a migration goes live, the frameworks here will give you something defensible.

No black boxes, no "just trust the model." Real approaches, with the trade-offs laid out honestly.

Forecasting Fundamentals

Before touching any tool or spreadsheet, get clear on what kind of forecast you're building and what it's for. Conflating forecast types is one of the fastest ways to lose credibility with a finance team.

Forecast Types

There are three core forecast types used in SEO forecasting, and they serve different purposes.

A descriptive forecast tells you where you're headed if nothing changes — useful for setting a baseline and spotting drift early. A predictive forecast incorporates live signals (rankings, crawl data, CTR shifts) to project likely outcomes under current conditions. A prescriptive forecast models what should happen if specific actions are taken — publishing 40 new pages, fixing crawl errors, building 200 links.

Most SEO forecasting models agencies deliver to clients are technically descriptive dressed up as prescriptive. That gap between what's promised and what's delivered is where most client-agency trust breaks down.

Baseline vs Uplift vs Scenarios

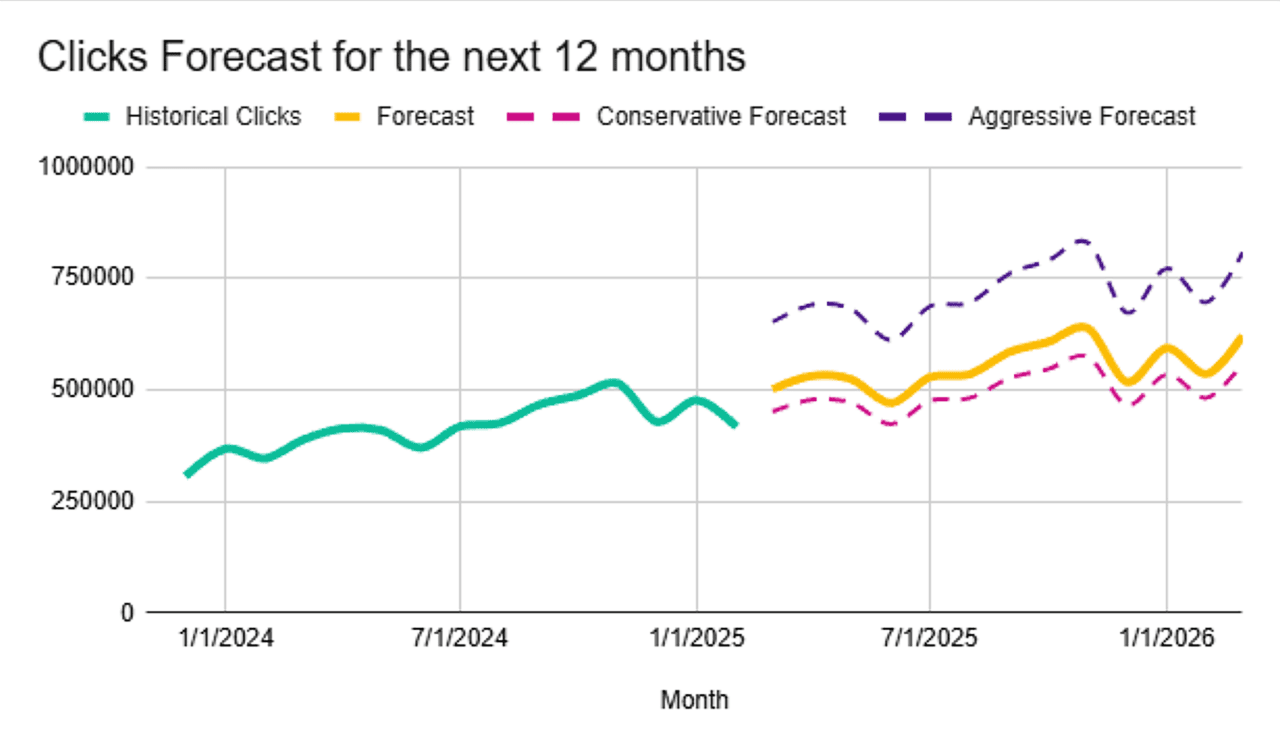

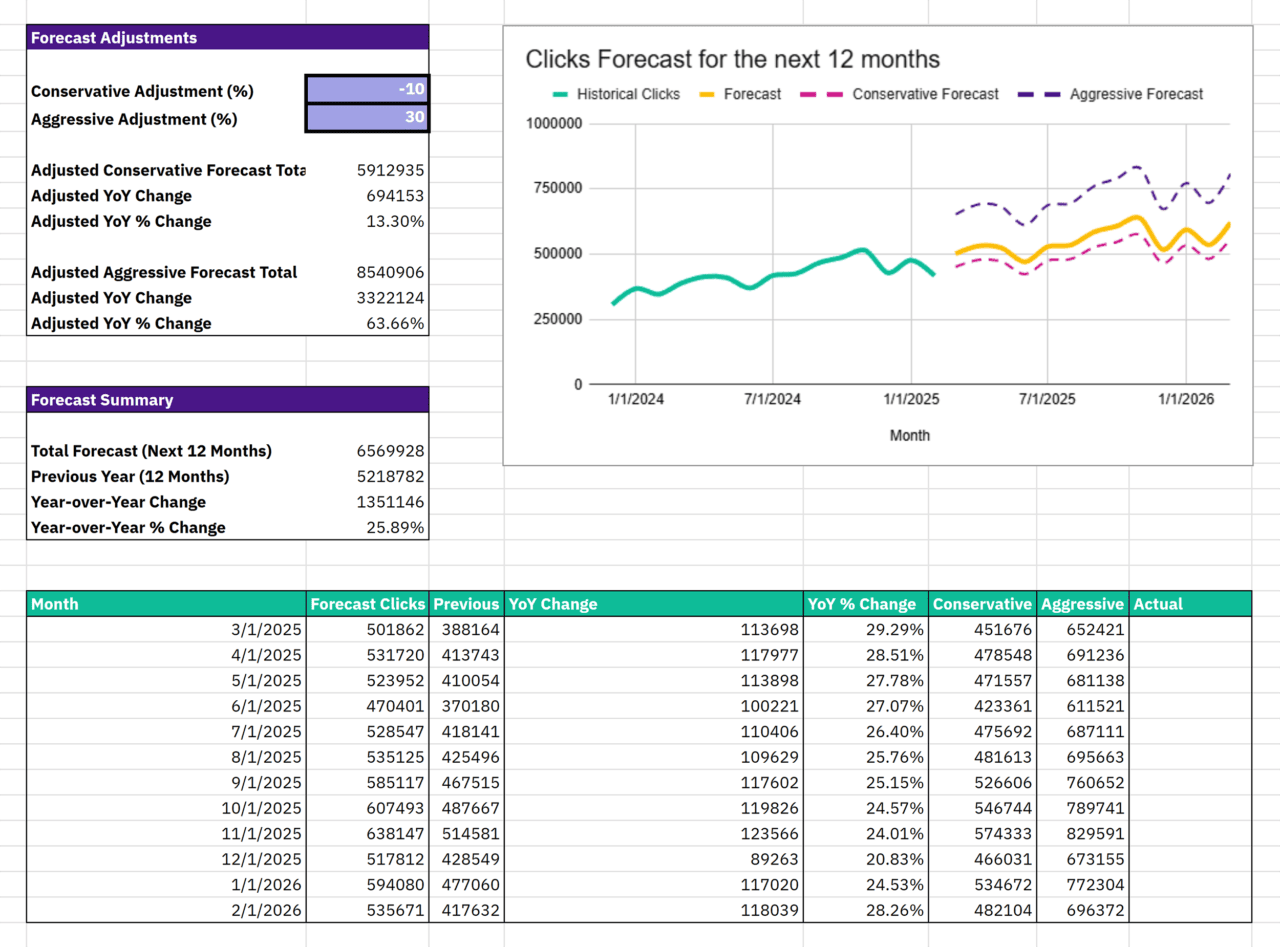

A baseline is your organic trajectory with zero intervention — what happens if the team publishes nothing, builds no links, fixes nothing. An uplift layers a specific planned action on top and estimates the incremental gain. A scenario forecast models three futures — conservative, base, aggressive — with explicit, named assumptions attached to each.

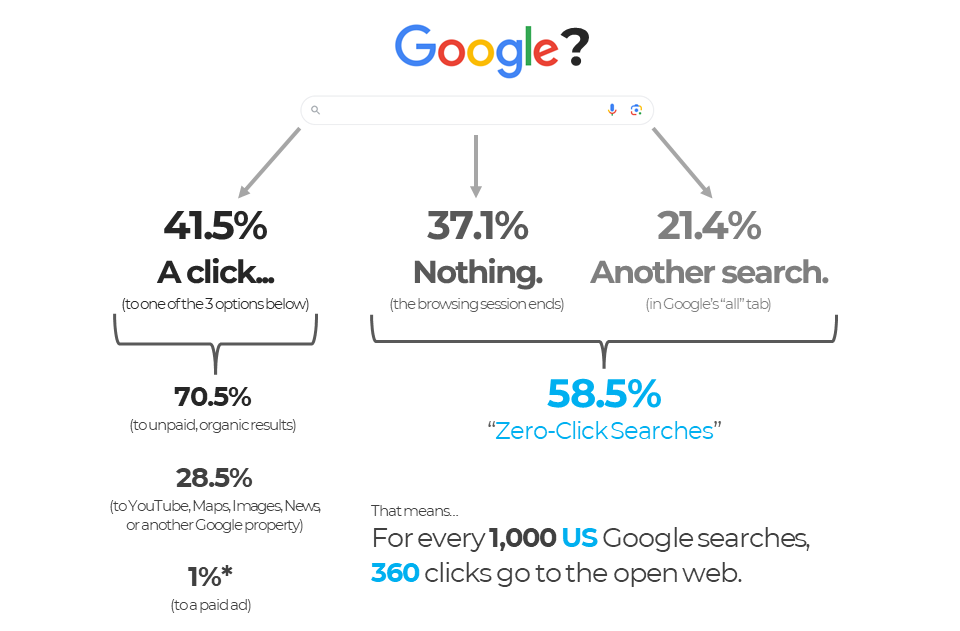

The most useful thing you can do for a stakeholder is show all three together. Rand Fishkin's zero-click search study found that out of every 1,000 Google searches in the US, only 360 clicks reach any property outside Google's own ecosystem. A baseline built on raw impression data — without accounting for zero-click SERPs and AI Overviews — will systematically overstate projected traffic. That's the kind of assumption that makes a forecast look great in January and embarrassing by Q3.

Choosing a Horizon

Short-term forecasts (1–3 months) can be fairly precise if your data is clean. Medium-term (3–6 months) is where most SEO traffic forecasting lives — long enough to be useful for planning, short enough to be defensible. Anything beyond 12 months is scenario planning, not forecasting, and should be framed that way.

A rule worth remembering: the longer your horizon, the wider your uncertainty bands need to be. A forecast that shows a single clean line 18 months out is lying. As Kevin Indig — who has led SEO at Shopify, G2, and Atlassian — noted: "Simply avoiding goals by saying 'SEO is a black box' doesn't resonate with leadership — they still expect a plan with accountable numbers." The answer isn't to avoid numbers. It's to show ranges with named assumptions and reforecast quarterly as conditions change.

Data Sources and Measurement Setup

The quality of your forecast ceiling is set by the quality of your data floor. Garbage in, garbage out — and in SEO, the garbage is often invisible until you're already presenting to a board.

GA4

GA4 is the default starting point for SEO forecasting, but it comes with real problems most guides politely skip. Channel groupings frequently misattribute organic visits as Direct: a user who clicks an organic result, gets interrupted, and returns via a bookmark is counted as Direct. The session-based data model of Universal Analytics is gone, replaced by event-based tracking that behaves differently enough to break year-over-year comparisons if you migrated mid-year.

Practical fix: create a custom channel group in GA4 that isolates sessions where the medium is organic and the source matches google|bing|yahoo|duckduckgo. Then build a separate exploration report for that segment filtered by landing page. This gives you page-level organic data that's actually usable for bottom-up forecasting.

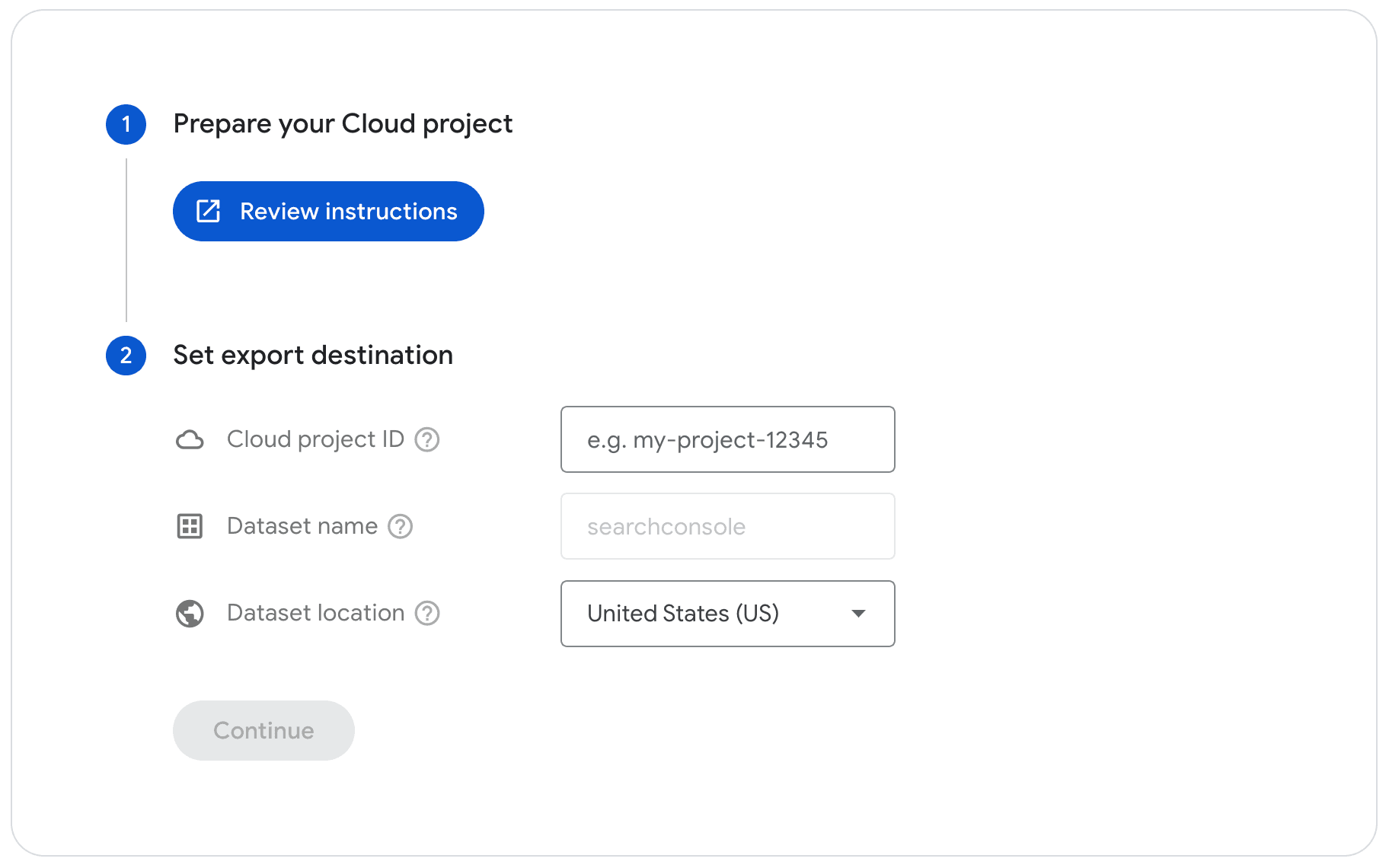

One more thing — GA4 data takes 24–48 hours to process and sampling kicks in on large date ranges. If you're pulling 12+ months for a trend model, export via BigQuery. Otherwise you're forecasting from a sample of a sample.

Google Search Console

GSC is the cleanest source for organic forecasting because it reports impressions, clicks, and average position directly from Google Search, with no sampling and no attribution model interference. The catch: GSC only retains 16 months of data, after which it's permanently deleted with no recovery option. If you need longer trend lines, you needed to be exporting yesterday.

The most useful GSC exports for SEO forecasting: queries by page (for bottom-up keyword mapping), impressions over time (for demand trend modeling), and position history on target keywords (for CTR curve calibration). The practical workflow many teams miss: export GSC data to BigQuery via the native bulk export that Google launched in February 2023. Set it up today and your history keeps accumulating past the 16-month window, building toward a rolling multi-year dataset. BigQuery stores the exported data for as long as you keep it, unlike the GSC UI, which cuts off hard at 16 months.

Aleyda Solis, founder of Orainti and one of the most cited SEO consultants globally, recommends using AI-assisted analysis tools on top of GSC data for forecasting: "If you want to forecast, you can use the Advanced Data Analysis plugin and take the latest fluctuations, certain average positions, scenarios, etc., into consideration. It can give you the same support that a data analyst would provide."

Google Ads Signals That Improve SEO Forecasts

This connection is chronically underused. Google Ads conversion data — particularly when you have auto-tagging enabled and a healthy impression share — gives you two things organic data can't: actual demand volume and real conversion rates by query intent.

If a paid campaign on "project management software for agencies" converts at 3.2% and you're forecasting organic rankings for that same query cluster, using 3.2% as your conversion rate assumption is far more grounded than any industry benchmark. This is exactly where combining SEO and Google Ads forecasts delivers real value: you're reading demand from auction data, not guessing at it.

For teams running SEO vs PPC forecasting comparisons or running Google Ads through YeezyPay agency accounts, there's a practical advantage: agency-level accounts give access to impression share data across full account history, which makes demand modeling for organic forecasting significantly more precise. You're not estimating search volume from third-party tools — you're reading it directly from Google's own auction signals.

CRM and Offline Conversions

For lead generation and ecommerce SEO forecasting alike, stopping at GA4 sessions is a mistake. The metric that matters to a business isn't organic clicks. It's revenue or pipeline, and that lives in Salesforce, HubSpot, or an order management system.

Connecting CRM data to GSC and GA4 creates a full-funnel picture: impressions → clicks → leads → qualified pipeline → closed revenue. Shopify senior SEO specialist Arthur Camberlein recommends combining first-party and third-party data explicitly: "First-party gives you insights into where you are currently appearing or ranking. Third-party data will enrich these with opportunities." The formula for SEO revenue forecasting in ecommerce: sessions × conversion rate × average order value — but only if conversion rate comes from your own CRM data filtered by organic traffic, not from a generic industry benchmark that has nothing to do with your site.
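As a minimal sketch of that arithmetic, the function below multiplies the three inputs of the ecommerce formula. The function name and the figures (40,000 sessions, a 1.8% conversion rate, an $85 average order value) are hypothetical placeholders, not benchmarks.

```python
def forecast_ecommerce_revenue(sessions: float, conversion_rate: float, aov: float) -> float:
    """Projected organic revenue = sessions x conversion rate x average order value."""
    if not 0 <= conversion_rate <= 1:
        raise ValueError("conversion_rate must be a fraction between 0 and 1")
    return sessions * conversion_rate * aov


# Hypothetical inputs: forecast organic sessions, an organic-only conversion
# rate taken from CRM data, and the average order value for organic orders.
revenue = forecast_ecommerce_revenue(sessions=40_000, conversion_rate=0.018, aov=85.0)
print(f"Projected monthly organic revenue: ${revenue:,.0f}")
```

The point of wrapping something this simple in a function is the validation: a conversion rate entered as 1.8 instead of 0.018 is a common spreadsheet slip, and it fails loudly here instead of inflating the forecast a hundredfold.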

Segmentation That Makes Forecasts Credible

A forecast that treats all organic traffic as one blob is useless for planning. The moment you segment — by brand, by intent, by page type — the forecast becomes something a team can actually act on.

Brand vs Non-Brand Separation

Brand traffic and non-brand traffic behave completely differently and need to be modeled separately. Brand queries spike with PR, TV, and paid brand campaigns. Non-brand traffic responds to ranking changes and content investment. Mixing them together masks both trends.

In GSC, filter queries containing your brand name (and all misspellings) into a separate segment. In GA4, create a custom segment for sessions that arrive through UTM-tagged paid brand campaigns, and exclude those from your organic non-brand baseline. Semrush's enterprise SEO benchmarking data shows that brand queries typically convert at 2–5x the rate of non-brand, which means a brand traffic spike can make your organic conversion rate look great while non-brand performance quietly declines.
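One way to apply the brand filter to an exported query list is a regular expression over the query text. This is a sketch only: the brand name "acme", its misspellings, and the row layout are all made up for illustration.

```python
import re

# Hypothetical brand name and misspellings; replace with your own list.
BRAND_PATTERN = re.compile(r"\b(acme|acmee|akme)\b", re.IGNORECASE)


def split_brand(rows):
    """Split query rows into (brand, non_brand) lists based on the query text."""
    brand, non_brand = [], []
    for row in rows:
        target = brand if BRAND_PATTERN.search(row["query"]) else non_brand
        target.append(row)
    return brand, non_brand


# Hypothetical rows shaped like a GSC query export.
rows = [
    {"query": "acme pricing", "clicks": 120},
    {"query": "Akme login", "clicks": 45},
    {"query": "project management software", "clicks": 80},
]

brand, non_brand = split_brand(rows)
brand_clicks = sum(r["clicks"] for r in brand)
non_brand_clicks = sum(r["clicks"] for r in non_brand)
print(f"Brand clicks: {brand_clicks}, non-brand clicks: {non_brand_clicks}")
```

The word boundaries keep the pattern from matching unrelated queries that merely contain the brand string inside a longer word.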

Intent Segmentation and Funnel Stages

Keyword ranking forecast accuracy improves dramatically when you group keywords by intent before modeling. Informational, navigational, commercial, and transactional queries have different CTR curves, different conversion rates, and different response times to ranking changes.

A practical segmentation framework: map each target keyword to a funnel stage (awareness / consideration / decision), assign a conversion rate from CRM data, and build separate forecast models per stage. The consistent differentiator in forecast accuracy is intent-based segmentation at the keyword level — not the sophistication of the forecasting model itself.
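The framework can be sketched in a few lines: tag each keyword with a funnel stage, look up that stage's conversion rate, and total the projected conversions per stage. The keywords, click forecasts, and rates below are hypothetical; in practice the rates would come from your CRM.

```python
from collections import defaultdict

# Hypothetical conversion rates per funnel stage (ideally sourced from CRM data).
STAGE_CONVERSION = {"awareness": 0.002, "consideration": 0.01, "decision": 0.04}

# Hypothetical keyword set with forecast monthly clicks.
keywords = [
    {"keyword": "what is project management", "stage": "awareness", "clicks": 5000},
    {"keyword": "best pm software for agencies", "stage": "consideration", "clicks": 1200},
    {"keyword": "pm software comparison", "stage": "consideration", "clicks": 800},
    {"keyword": "acme pm pricing", "stage": "decision", "clicks": 300},
]


def conversions_by_stage(keywords, rates):
    """Sum projected conversions per funnel stage using stage-specific rates."""
    totals = defaultdict(float)
    for kw in keywords:
        totals[kw["stage"]] += kw["clicks"] * rates[kw["stage"]]
    return dict(totals)


stage_forecast = conversions_by_stage(keywords, STAGE_CONVERSION)
for stage, conversions in stage_forecast.items():
    print(f"{stage}: {conversions:.1f} projected conversions")
```

With these made-up inputs, the decision stage produces more conversions from 300 clicks than the awareness stage does from 5,000, which is exactly the effect a blended conversion rate hides.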

Page-Type Cohorts

Not all pages rank the same way or respond to the same inputs. A blog post targeting informational keywords behaves differently from a product category page targeting transactional queries. Grouping pages into cohorts — blog, product, category, landing page, tool — lets you apply different growth rate assumptions to each group.

This is especially important for SEO forecasting for ecommerce, where category pages and product pages have fundamentally different ranking dynamics, seasonal patterns, and conversion rates. Forecasting them together introduces noise that makes the whole model less reliable.

Handling Migrations, Rebrands, and Tracking Changes

This is where most forecasts quietly break. A site migration, a GA4 implementation change, or a domain rebrand creates a discontinuity in your time series. If you don't account for it, your trend model will either overfit the post-migration dip or smooth over a real ranking loss.

The practical approach: mark every tracking change, migration date, and rebrand event as an annotated break point in your data. Build separate pre- and post-event baselines. Fit your trend model to the post-event data only, using the pre-event data as a sanity check — not as a training set.
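A minimal sketch of that approach, assuming a simple linear trend and a single migration date. The monthly figures are invented, and `fit_linear_trend` is an illustrative helper, not a library function.

```python
def fit_linear_trend(values):
    """Least-squares line through values plotted against their index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


# Hypothetical monthly organic clicks; the site migrated in July 2024.
history = [
    ("2024-01", 10000), ("2024-02", 10200), ("2024-03", 10400),
    ("2024-04", 10600), ("2024-05", 10800), ("2024-06", 11000),
    ("2024-07", 8000), ("2024-08", 8100), ("2024-09", 8200),
    ("2024-10", 8300), ("2024-11", 8400), ("2024-12", 8500),
]
BREAK_POINT = "2024-07"  # annotated migration month (ISO strings sort correctly)

pre = [clicks for month, clicks in history if month < BREAK_POINT]
post = [clicks for month, clicks in history if month >= BREAK_POINT]

# Fit on post-event data only; the pre-event slope is kept as a sanity check.
post_slope, post_intercept = fit_linear_trend(post)
pre_slope, _ = fit_linear_trend(pre)
next_month = post_intercept + post_slope * len(post)

print(f"Pre-migration growth: {pre_slope:.0f} clicks/month")
print(f"Post-migration growth: {post_slope:.0f} clicks/month")
print(f"Next-month forecast from post-event trend: {next_month:.0f}")
```

Fitting one line through all twelve months here would produce a downward slope, even though traffic grew in every month except the migration itself.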

SEO Forecasting Methods

This is the section most guides spend three paragraphs on before recommending a single Excel formula. The reality is there are at least eleven distinct SEO forecasting methods worth knowing, and the right one depends on your data quality, your time horizon, and what question you're actually trying to answer. Most seasoned practitioners combine two or three into a hybrid model.

Top-Down Trend Forecasting

Start with total organic traffic, fit a trend line (linear, exponential, or seasonal decomposition depending on the pattern), and project forward. Simple, fast, and useful for high-level stakeholder communication.

The limitation: it assumes the future looks like the past. It misses ranking changes, algorithm updates, and any planned content investment. Use it as a sanity check against more granular methods, not as a standalone answer. A 2026 top-down forecast built on 2023 traffic data will also structurally underestimate the CTR impact of AI Overviews — a risk documented across multiple datasets showing 40–60% CTR declines on informational queries since mid-2024.

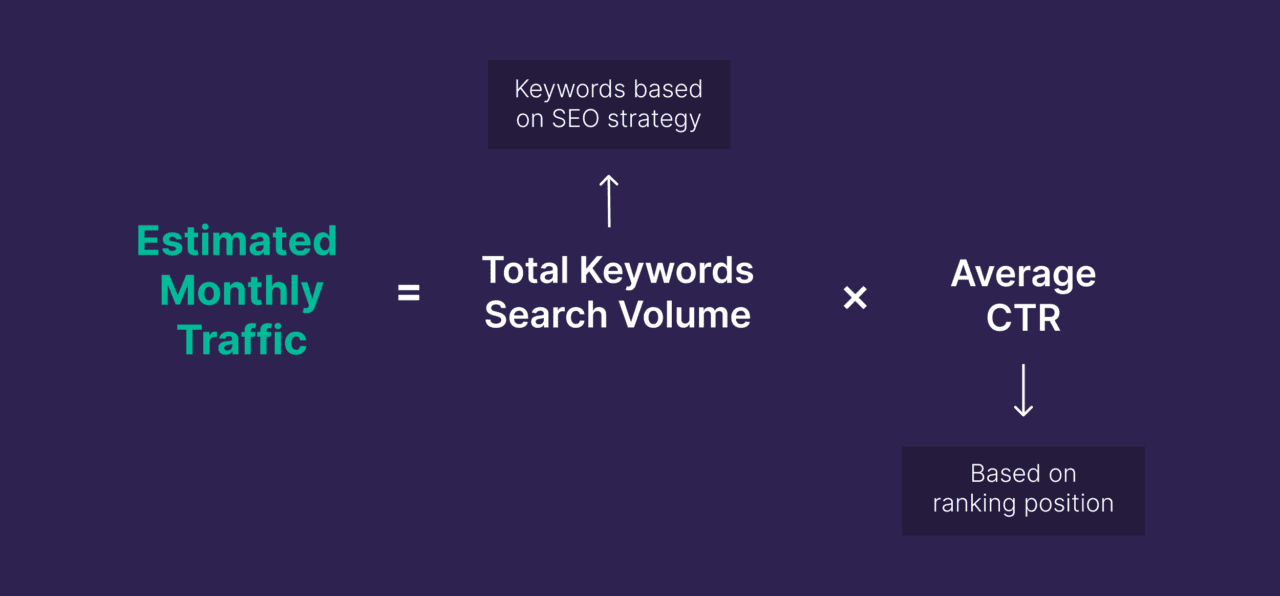

Bottom-Up Keyword-to-Page Mapping

This is the most common method for SEO traffic forecasting at the campaign level. For each target keyword: take the monthly search volume, apply a CTR estimate based on projected rank, multiply by your page's conversion rate, and sum across the keyword set.

The formula looks clean. The execution is where it falls apart. Search volume from tools like Ahrefs or Semrush routinely diverges from GSC impressions for long-tail queries. And ranking assumptions — "we'll hit position 3 in six months" — are often optimistic without supporting link velocity or topical authority data. Build in a confidence discount: if you estimate 1,000 monthly clicks at position 3, model 600–700 as your expected case and present the range, not the point estimate.
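The calculation and the confidence discount can be sketched together. Everything numeric here is a placeholder: the position-to-CTR table, the keyword volumes, and the target positions are assumptions, and the 0.6 to 0.7 discount simply mirrors the 600–700 out of 1,000 example above.

```python
# Hypothetical position-to-CTR assumptions; calibrate these from your own GSC data.
CTR_BY_POSITION = {1: 0.25, 2: 0.13, 3: 0.09, 4: 0.06, 5: 0.04}

# Hypothetical target keywords with monthly search volume and projected rank.
keywords = [
    {"keyword": "pm software for agencies", "volume": 4000, "target_position": 3},
    {"keyword": "agency project tracker", "volume": 1500, "target_position": 1},
]


def bottom_up_clicks(keywords, ctr_by_position):
    """Sum of volume x CTR at the projected position across the keyword set."""
    return sum(kw["volume"] * ctr_by_position[kw["target_position"]] for kw in keywords)


point_estimate = bottom_up_clicks(keywords, CTR_BY_POSITION)

# Confidence discount: present 60-70% of the point estimate as the expected range.
expected_low = point_estimate * 0.6
expected_high = point_estimate * 0.7

CONVERSION_RATE = 0.02  # hypothetical page-level conversion rate
print(f"Point estimate: {point_estimate:.0f} clicks/month")
print(f"Expected range: {expected_low:.0f}-{expected_high:.0f} clicks/month")
print(f"Expected conversions: {expected_low * CONVERSION_RATE:.1f}-{expected_high * CONVERSION_RATE:.1f}")
```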

Page-Level Cohort Forecasting

Instead of modeling individual keywords, group pages into cohorts by type and age, then model cohort-level traffic growth based on historical performance of similar pages. A blog post published six months ago in your "how-to" cluster is a better predictor of what a new blog post will do than any keyword volume estimate.

This is particularly powerful for ecommerce SEO forecasting, where product and category pages follow distinct traffic trajectories. Category pages have different seasonal patterns, conversion rates, and ranking dynamics than product detail pages, so forecast the two cohorts separately.
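A rough sketch of the cohort idea: take the clicks earlier pages in a cohort earned in each month after publication, use the median as the cohort's ramp curve, and scale it by the number of pages you plan to publish. The URLs and numbers are hypothetical, and using the median is one reasonable choice among several.

```python
from statistics import median

# Hypothetical monthly clicks for past "how-to" posts, indexed by months since publication.
cohort_history = {
    "/blog/how-to-a": [50, 120, 200, 260],
    "/blog/how-to-b": [30, 90, 180, 240],
    "/blog/how-to-c": [40, 150, 220, 300],
}


def cohort_curve(history):
    """Median clicks per month-since-publication across all pages in the cohort."""
    months = min(len(series) for series in history.values())
    return [median(series[m] for series in history.values()) for m in range(months)]


curve = cohort_curve(cohort_history)

# Planned action: publish 5 new posts in this cohort in the same month.
NEW_PAGES = 5
cohort_forecast = [clicks * NEW_PAGES for clicks in curve]
print("Ramp curve per page:", curve)
print("Forecast for new batch:", cohort_forecast)
```

The median keeps one breakout post from dragging the whole cohort curve upward, which matters when a cohort has only a handful of pages.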

CTR Curve Forecasting by Rank and SERP Features

SEO forecasting using CTR curves means applying position-specific click-through rates to your impression estimates. The trouble is that every published CTR benchmark from 2022 or 2023 is structurally wrong for 2025–2026 forecasting.

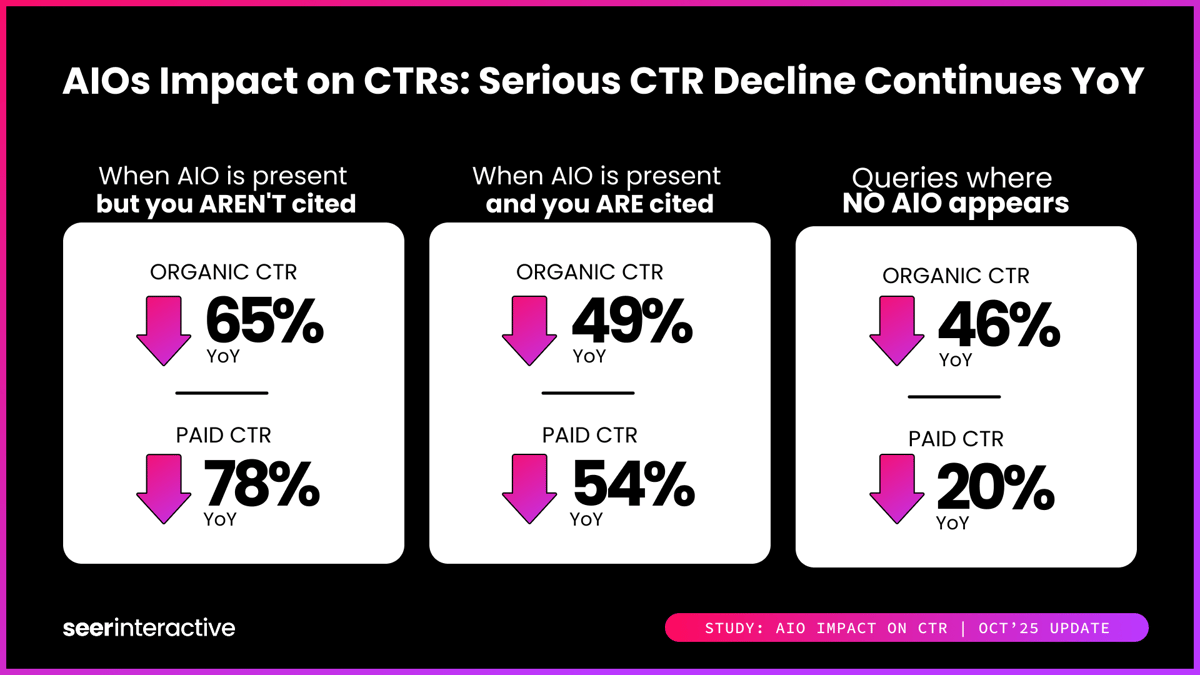

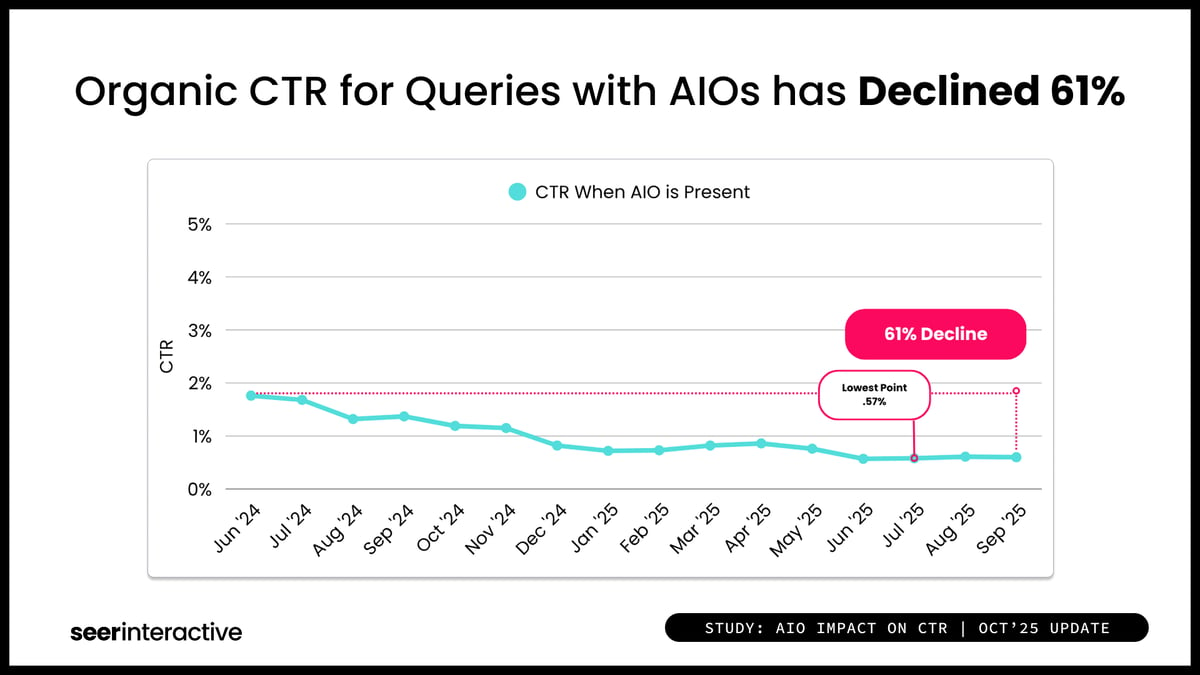

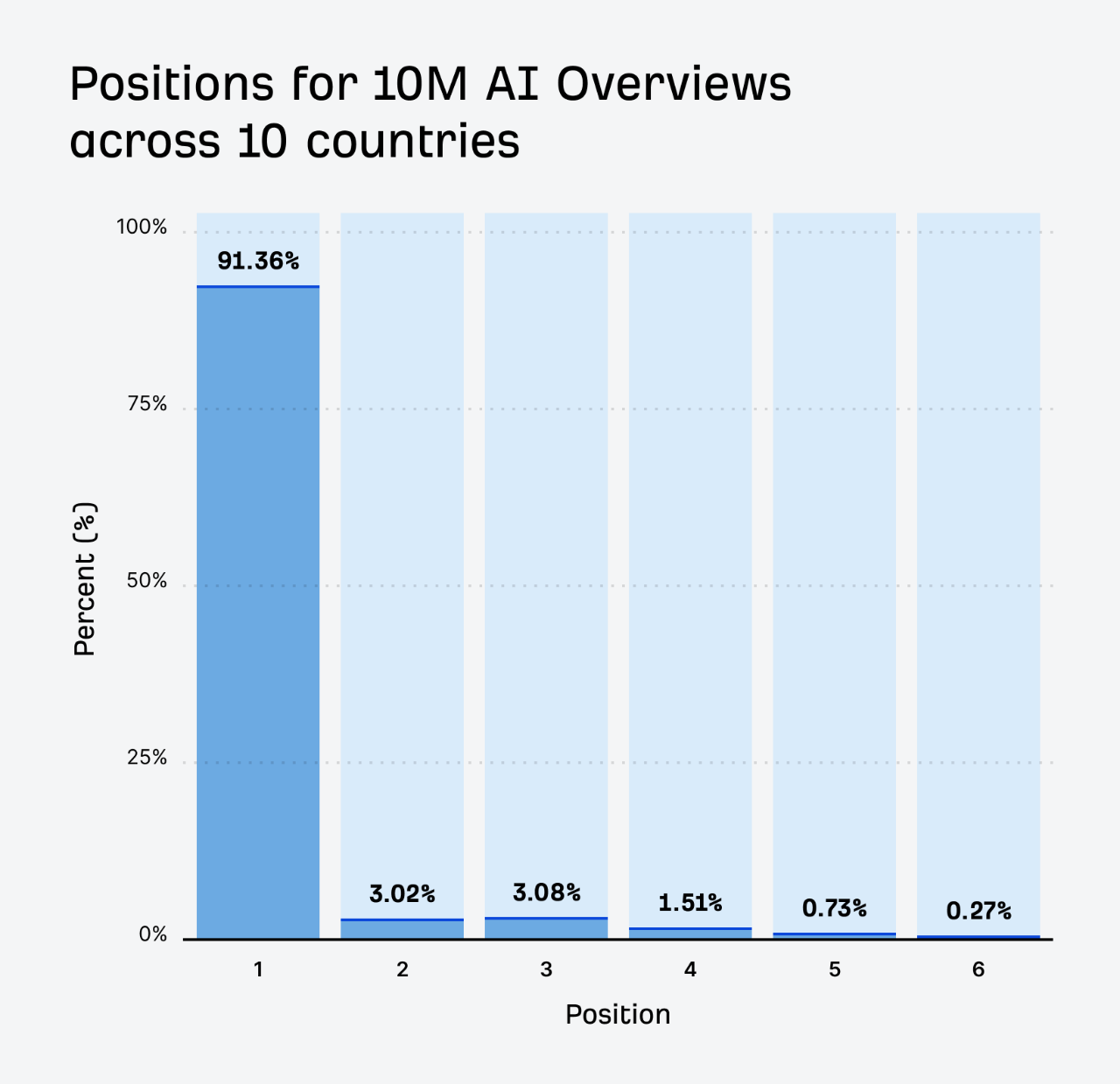

Seer Interactive's study of 3,119 informational queries across 42 client organizations, tracking 25.1 million organic impressions from June 2024 to September 2025, found that organic CTR for queries with AI Overviews dropped 61%, from 1.76% to 0.61%.

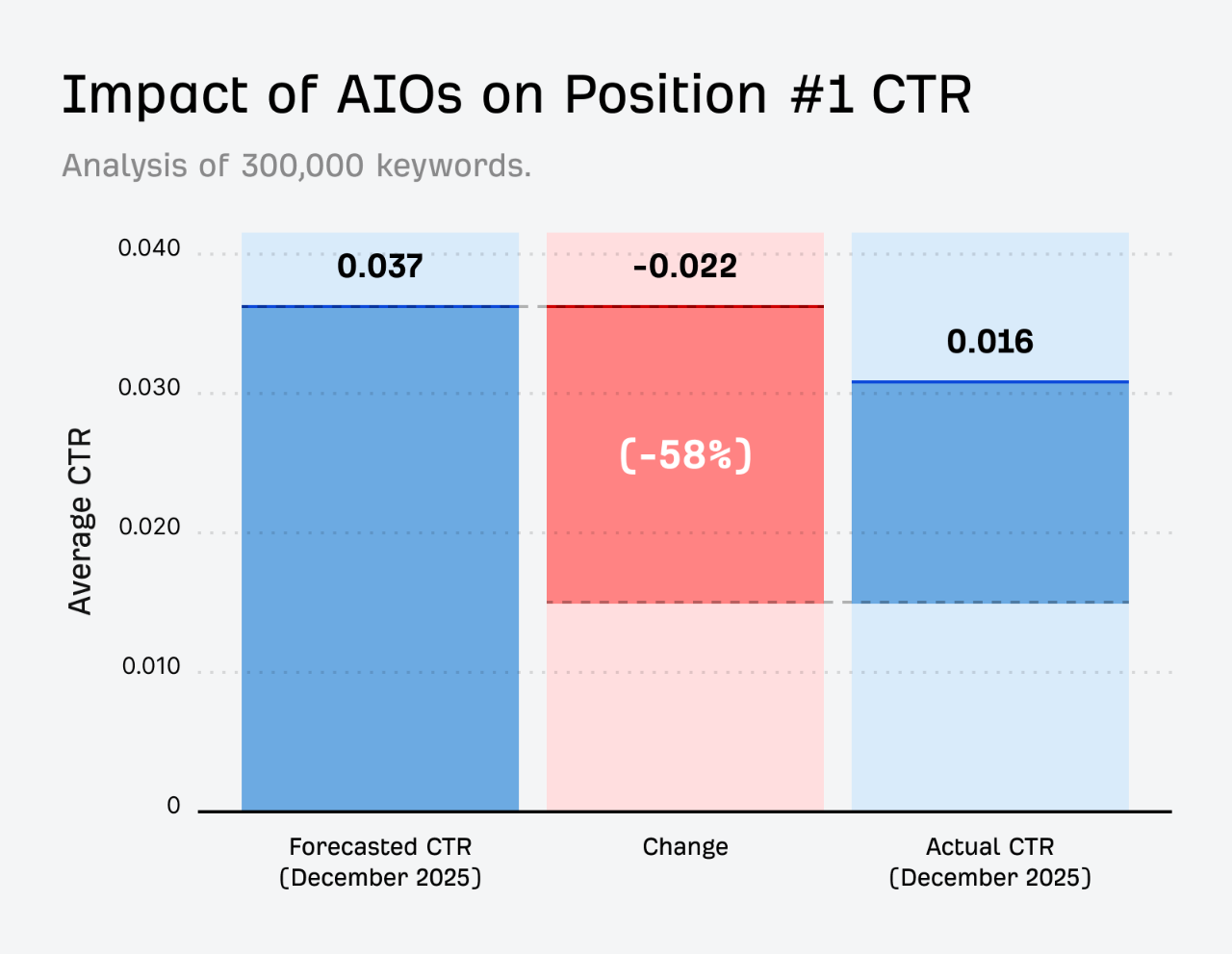

Even queries without AI Overviews fell 41%, settling at 1.62% by September 2025. Ahrefs independently confirmed a 58% CTR reduction for position-one content on AI Overview queries as of December 2025.

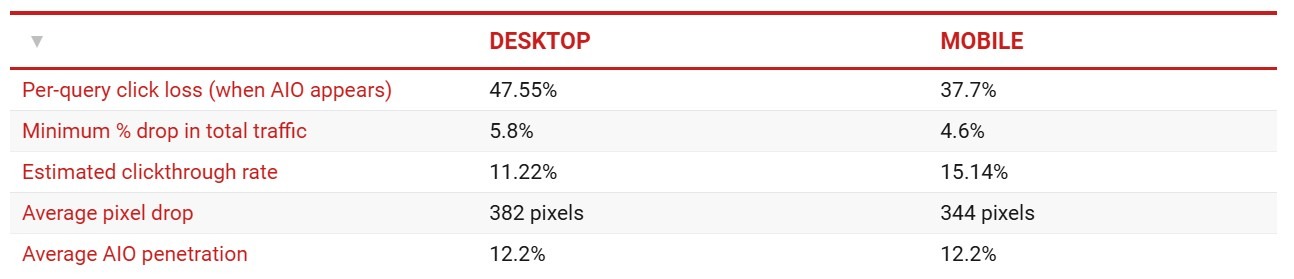

Authoritas found a 47.5% CTR drop on desktop when AI Overviews appear.

The practical fix: stop using generic position-CTR tables. Pull actual GSC CTR per page and query, segment by whether AI Overviews appear for that query cluster, and use those real numbers as your curve. If your target keywords trigger AI Overviews, build a separate downside scenario that reflects the 40–60% CTR haircut the data consistently shows. An organic traffic prediction built on pre-2024 CTR curves bakes in click-through rates that have since fallen 40–60% on informational content, so it projects roughly 1.7 to 2.5 times the traffic that actually arrives, before the quarter even starts.
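Building your own curve can be sketched as a small aggregation: bucket rows by rounded position and by an AI Overview flag, then divide total clicks by total impressions in each bucket. The rows are invented, and the `has_aio` flag is an assumption: it is presumed to come from whatever SERP tracking you run alongside the GSC export.

```python
from collections import defaultdict

# Hypothetical GSC-style rows; has_aio is an assumed flag joined in from SERP tracking.
rows = [
    {"position": 1.2, "impressions": 10000, "clicks": 300, "has_aio": True},
    {"position": 1.4, "impressions": 5000, "clicks": 900, "has_aio": False},
    {"position": 2.8, "impressions": 8000, "clicks": 80, "has_aio": True},
    {"position": 3.1, "impressions": 4000, "clicks": 240, "has_aio": False},
]


def empirical_ctr_curve(rows):
    """CTR per (rounded position, has_aio) bucket, weighted by impressions."""
    totals = defaultdict(lambda: [0, 0])  # bucket -> [clicks, impressions]
    for row in rows:
        bucket = (round(row["position"]), row["has_aio"])
        totals[bucket][0] += row["clicks"]
        totals[bucket][1] += row["impressions"]
    return {
        bucket: clicks / impressions
        for bucket, (clicks, impressions) in totals.items()
        if impressions > 0
    }


ctr_curve = empirical_ctr_curve(rows)
for (position, has_aio), ctr in sorted(ctr_curve.items()):
    label = "with AIO" if has_aio else "no AIO"
    print(f"Position {position} ({label}): {ctr:.1%}")
```

Summing clicks and impressions before dividing keeps low-impression queries from skewing the curve, which averaging per-query CTRs would do.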

Seasonality-Adjusted Forecasting

SEO forecasting with seasonality means decomposing your traffic time series into trend, seasonal, and residual components before projecting. Without this step, a forecast built in Q4 (high season for most retail) will systematically overestimate Q1 performance.

The standard tool is STL decomposition (Seasonal-Trend decomposition using LOESS), available in Python's statsmodels library. Meta's open-source Prophet library also handles seasonality well and requires minimal statistical expertise. For teams without a data scientist, a simpler approach works: calculate the ratio of each month's traffic to the annual average over two or three prior years, then apply those multipliers to your trend-line projections. The output is directionally more accurate than an unseasonalized model and takes about an afternoon to build in a standard SEO forecasting spreadsheet.
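The simpler ratio approach translates directly into code. This sketch assumes two complete calendar years of monthly traffic (the numbers are made up) and a flat trend projection, chosen only to make the multipliers easy to see.

```python
def seasonal_indices(years):
    """Average, across years, of each month's ratio to that year's monthly mean."""
    indices = []
    for month in range(12):
        ratios = [year[month] / (sum(year) / 12) for year in years]
        indices.append(sum(ratios) / len(ratios))
    return indices


# Hypothetical monthly organic sessions (Jan..Dec) for two prior years.
year_1 = [80, 80, 90, 100, 100, 100, 90, 90, 100, 110, 130, 130]
year_2 = [96, 96, 108, 120, 120, 120, 108, 108, 120, 132, 156, 156]

indices = seasonal_indices([year_1, year_2])

# Apply the multipliers to a trend-line projection (flat here for simplicity).
trend_projection = [150.0] * 12
seasonal_forecast = [t * i for t, i in zip(trend_projection, indices)]

print("January multiplier:", round(indices[0], 2))
print("November multiplier:", round(indices[10], 2))
print("Seasonal forecast:", [round(v) for v in seasonal_forecast])
```

Dividing by each year's own average before averaging across years keeps overall growth from leaking into the seasonal multipliers.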

Scenario Forecasting

SEO scenario planning means building three versions of the future with explicit, named assumptions, not vague ranges. Conservative: no new content, no link acquisition. Base: 8 new pages per month, 20 links. Aggressive: 20 pages per month, 60 links. Every number tied to a lever someone in the room controls.

This is the format that holds up best as a SEO forecast for stakeholders because it makes the levers visible. Backlinko's SEO team documented their own approach: "Forecasting is hard, and imperfect, but it's essential. It forces you to tie effort to outcome. And to build trust, we always present a range — best case, expected case, and failure case — not just a single optimistic projection. That honesty helps us make better decisions and earn buy-in." When actual performance lands between conservative and base, the team can explain why — and the stakeholder already agreed to those assumptions at the start.
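To make the named assumptions concrete, here is a sketch that stores each scenario's levers in a small data class and projects traffic from them. Several things are assumptions made for illustration: that the link counts are monthly, the per-page and per-link click values, the 20,000-click baseline, and the simple linear accumulation of gains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    pages_per_month: int
    links_per_month: int  # assumed monthly; the scenario text leaves this open


# Hypothetical lift assumptions; these are the numbers stakeholders sign off on.
BASELINE_CLICKS = 20_000
CLICKS_PER_PAGE = 40
CLICKS_PER_LINK = 5

SCENARIOS = [
    Scenario("conservative", pages_per_month=0, links_per_month=0),
    Scenario("base", pages_per_month=8, links_per_month=20),
    Scenario("aggressive", pages_per_month=20, links_per_month=60),
]


def project(scenario: Scenario, months: int) -> int:
    """Monthly clicks after `months`, assuming gains accumulate linearly."""
    monthly_gain = (
        scenario.pages_per_month * CLICKS_PER_PAGE
        + scenario.links_per_month * CLICKS_PER_LINK
    )
    return BASELINE_CLICKS + monthly_gain * months


projections = {s.name: project(s, months=6) for s in SCENARIOS}
for name, clicks in projections.items():
    print(f"{name}: {clicks:,} clicks/month after 6 months")
```

Keeping the levers in one visible structure is the design point: when results land between two scenarios, the conversation is about which assumption moved, not about whether the model was right.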

Regression Driver Model

A regression driver model identifies which measurable inputs — number of indexed pages, average position, domain rating, internal link count, page speed scores — statistically explain past traffic, then uses those relationships to project forward based on planned changes.

This is one of the more robust SEO forecasting methods for mature sites with long data histories. It answers: "If we publish 30 new pages and improve average position from 12 to 8 on our target cluster, what's the expected traffic lift?" — with a number grounded in your own site's historical relationships, not industry benchmarks. The limitation: regression models assume past relationships hold. An algorithm update that changes how Google weights a signal can break the model overnight. Always validate against a holdout period (last 3 months) before trusting any forward projection.
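A deliberately small sketch of the idea, using a single driver (indexed pages) rather than the several a real model would include. The data is invented, the helpers are illustrative, and the last three months are held out for validation as suggested above.

```python
def fit_ols(xs, ys):
    """Simple least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def mape(actual, predicted):
    """Mean absolute percentage error, as a fraction."""
    return sum(abs(a - p) / a for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical monthly history: indexed pages (driver) and organic clicks (outcome).
indexed_pages = [100, 110, 120, 130, 140, 150, 160, 170, 180, 190, 200, 210]
clicks = [5100, 5400, 6100, 6400, 7100, 7400, 8100, 8400, 9100, 9400, 10100, 10400]

HOLDOUT = 3  # validate on the last 3 months
slope, intercept = fit_ols(indexed_pages[:-HOLDOUT], clicks[:-HOLDOUT])

holdout_pred = [intercept + slope * x for x in indexed_pages[-HOLDOUT:]]
holdout_error = mape(clicks[-HOLDOUT:], holdout_pred)

# Planned change: publish 30 new pages on top of the current index.
planned_pages = indexed_pages[-1] + 30
projected_clicks = intercept + slope * planned_pages

print(f"Clicks per additional indexed page: {slope:.1f}")
print(f"Holdout MAPE: {holdout_error:.1%}")
print(f"Projected clicks at {planned_pages} pages: {projected_clicks:.0f}")
```

Only trust the projection if the holdout error is acceptable; a model that fits nine months well and misses the next three badly has learned noise.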

Time-Series Forecasting

Time-series models — ARIMA, SARIMA, Prophet — treat organic traffic as a sequence of observations and project forward based on pattern recognition. They handle trend, seasonality, and autocorrelation more rigorously than a simple trend line.

Meta's open-source Prophet library has become a practical default for SEO teams with Python access. It handles missing data, multiple seasonality periods (weekly + annual), and allows you to add known future events as regressors. Kevin Indig recently documented building a Prophet MCP server that wires GSC data directly into Prophet for automated forecasting: "One prompt. Claude pulls your GSC data through one MCP server, runs Prophet through another, and gives you statistical rigour wrapped in context you can act on — trend detection with confidence intervals, weekly seasonality quantified per day." That's what modern SEO forecasting for budget planning infrastructure looks like in 2025–2026.

Monte Carlo Ranges and Uncertainty Bands

Every point forecast is wrong. The question is how wrong, and in which direction. Monte Carlo simulation runs your forecast model thousands of times with randomly sampled inputs — CTR assumptions, ranking improvement rates, search volume estimates — and produces a distribution of outcomes rather than a single number.

The output — "there's a 70% probability organic traffic lands between X and Y" — is more honest and more useful than a single projected number. It also makes the SEO forecast accuracy conversation productive: you can show stakeholders which input assumptions drive the most variance in outcomes. This is especially valuable right now, when CTR curve assumptions carry 40–60% uncertainty depending on AI Overview penetration in your keyword set.
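A bare-bones sketch of the simulation, using only the standard library. The input ranges (search volume between 8,000 and 12,000, CTR between 2% and 5%) and the choice of triangular and uniform distributions are assumptions for illustration; a 70% band is read off as the 15th to 85th percentile.

```python
import random


def simulate_clicks(n_runs=10_000, seed=42):
    """Run the volume x CTR model many times with randomly sampled inputs."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    outcomes = []
    for _ in range(n_runs):
        volume = rng.triangular(8000, 12000, 10000)  # low, high, most likely
        ctr = rng.uniform(0.02, 0.05)
        outcomes.append(volume * ctr)
    return sorted(outcomes)


def percentile(sorted_values, pct):
    """Value at the given percentile of an already sorted list."""
    index = min(len(sorted_values) - 1, int(pct / 100 * len(sorted_values)))
    return sorted_values[index]


outcomes = simulate_clicks()
p15, p50, p85 = (percentile(outcomes, p) for p in (15, 50, 85))

print(f"Median outcome: {p50:.0f} clicks/month")
print(f"70% of simulated outcomes fall between {p15:.0f} and {p85:.0f}")
```

Rerunning with one input held fixed at a time shows which assumption contributes most to the width of the band.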

Causal Forecasting and Incrementality

Causal forecasting tries to answer a harder question than "what will traffic be?" It asks: "How much of that traffic is actually caused by our SEO work, versus market growth, brand campaigns, or other factors?"

The standard method is a geo-lift or time-based holdout test: run SEO interventions on some page sets, hold others flat, and measure the difference. This is where combining SEO and Google Ads forecasts becomes genuinely useful: if you have geo-lift data from paid campaigns, you can use the same geographic segments to isolate organic incrementality. It's more work, but it's the only way to make an SEO growth projection that finance will treat as causal rather than correlational.
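One common way to read such a test is a difference-in-differences comparison: the change in the treated page set minus the change in the held-out set over the same period. That estimator is a choice made for this sketch, not something the method above prescribes, and the weekly click figures are invented.

```python
def mean(values):
    return sum(values) / len(values)


def diff_in_diff(test_before, test_after, control_before, control_after):
    """Incremental lift = change in test group minus change in control group."""
    test_change = mean(test_after) - mean(test_before)
    control_change = mean(control_after) - mean(control_before)
    return test_change - control_change


# Hypothetical weekly organic clicks for treated pages and held-out pages.
test_before = [1000, 1020, 980, 1000]
test_after = [1200, 1250, 1150, 1200]
control_before = [900, 910, 890, 900]
control_after = [950, 960, 940, 950]

lift = diff_in_diff(test_before, test_after, control_before, control_after)
print(f"Incremental weekly clicks attributable to the intervention: {lift:.0f}")
```

In this example the treated pages gained 200 weekly clicks, but the control pages gained 50 with no intervention, so only 150 can be credited to the SEO work.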

Hybrid SEO Forecasting

In practice, the most defensible SEO forecasting methods combine at least two approaches. A common hybrid: top-down seasonality-adjusted trend for the baseline, bottom-up keyword-to-page mapping for the uplift estimate, and Monte Carlo simulation to produce the uncertainty range around both.

For an SEO forecasting template or SEO forecasting spreadsheet that's actually usable by a non-technical stakeholder, the output should show: baseline trajectory, expected uplift from planned actions, confidence range, and the three or four assumptions the model is most sensitive to — in a single view. If it takes more than one page to communicate, the model is too complex for the audience it's serving.

Risks, Pitfalls, and Mitigation

Knowing the methods is half the job. Knowing where they break is the other half — and this is what separates an SEO forecasting model that survives contact with reality from one that looks great in a deck.

Cannibalization and Intent Drift

Keyword cannibalization — multiple pages competing for the same query — breaks bottom-up forecasts silently. You model page A ranking for query X, but page B is also competing, splitting impressions and depressing CTR on both. The forecast shows strong potential; the actual traffic is fragmented across pages neither of which is fully optimized.

Intent drift is a related but different problem: a query that was transactional two years ago has become informational as the market matured, and Google's SERP now shows blog posts instead of product pages. Your product page ranking model is built on historical CTR data from a SERP that no longer exists. Fix: audit your target keyword set for cannibalization before building forecasts, and check SERP composition — not just rankings — for each priority query cluster at least quarterly.
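The cannibalization audit can be sketched as a pass over a query-by-page export: flag any query where more than one page holds a meaningful share of impressions. The rows, URLs, and the 20% share threshold are hypothetical choices for illustration.

```python
from collections import defaultdict

# Hypothetical rows shaped like a GSC query-by-page export.
rows = [
    {"query": "pm software", "page": "/product", "impressions": 6000},
    {"query": "pm software", "page": "/blog/best-pm", "impressions": 4000},
    {"query": "gantt chart", "page": "/blog/gantt", "impressions": 5000},
    {"query": "gantt chart", "page": "/features", "impressions": 200},
    {"query": "pm pricing", "page": "/pricing", "impressions": 900},
]


def find_cannibalized(rows, min_share=0.2):
    """Return queries where 2+ pages each hold at least min_share of impressions."""
    by_query = defaultdict(dict)
    for row in rows:
        pages = by_query[row["query"]]
        pages[row["page"]] = pages.get(row["page"], 0) + row["impressions"]

    flagged = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        competing = sorted(
            page for page, imps in pages.items() if total and imps / total >= min_share
        )
        if len(competing) > 1:
            flagged[query] = competing
    return flagged


cannibalized = find_cannibalized(rows)
for query, pages in cannibalized.items():
    print(f"'{query}' is split across: {', '.join(pages)}")
```

The share threshold matters: without it, nearly every query would be flagged, since secondary pages often pick up a trickle of impressions without really competing.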

SERP Volatility and Feature Changes

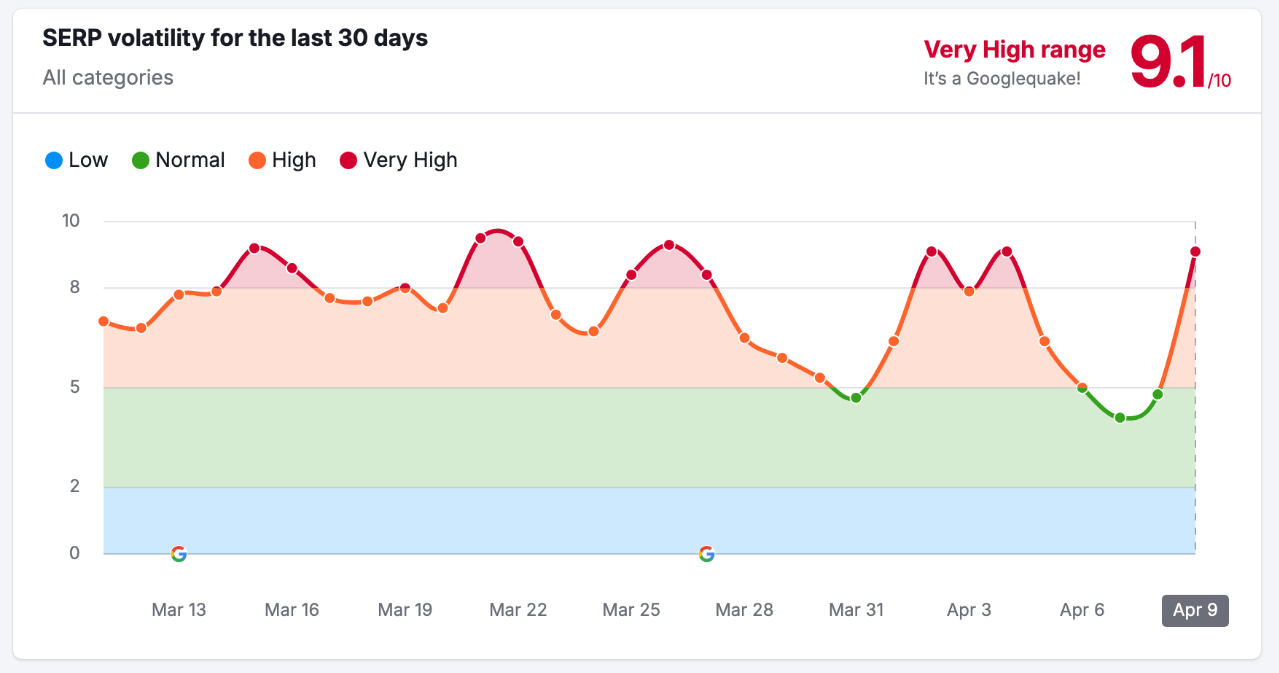

Seer Interactive's 15-month CTR tracking captured exactly what SERP volatility looks like in real numbers: paid CTR on AI Overview queries crashed from roughly 11% to 3.26% in a single month during July 2025. That's not a gradual drift — it's a discontinuity. Any forecast that didn't model this scenario lost credibility overnight.

Authoritas research found that when AI Overviews appear, the top organic result's CTR drops 47.5% on desktop and 37.7% on mobile. These findings were significant enough to be submitted as part of a formal legal complaint to the UK's Competition and Markets Authority. Build SERP feature monitoring into your forecasting workflow as a standing quarterly review. If the SERP composition for your target queries shifts, your CTR assumptions need to update immediately — not at the next annual planning cycle.

Overfitting and Model Leakage

An overfitted model looks great on historical data and falls apart on new data. The classic mistake: build a regression model on 24 months of data, optimize it until it explains 95% of historical variance, then discover it has no predictive power because it learned noise rather than signal.

Standard fix: split your data into a training set and a holdout validation set (last 3–6 months), fit the model on the training set only, and measure SEO forecast accuracy on the holdout before trusting any forward projection. If accuracy on the holdout is materially worse than on training data, the model is overfit and needs to be simplified before anyone presents it to a stakeholder.

Attribution Noise Across SEO and Paid Search

Attribution noise is arguably the most underestimated problem in SEO revenue forecasting. A user clicks a Google Ad, doesn't convert, returns three days later via organic search, and converts. Depending on your attribution model, that conversion gets assigned entirely to paid, entirely to organic, or split — and each produces a different CPA for each channel.

Wil Reynolds documented the problem from the inside: in 2024, Seer's own site saw a 41% drop in organic traffic — well beyond Gartner's prediction of a 25% search volume decline by 2026 — yet revenue and leads were unaffected. The traffic number was being used as a proxy for business performance, and it was a bad proxy. That's what attribution noise looks like at the business level: the metric moves, but the outcome doesn't, and the forecast built on the metric becomes meaningless.

For teams running Google Ads through YeezyPay agency accounts, cross-channel attribution clarity is meaningfully easier to achieve — agency-level account structures separate brand and non-brand campaigns cleanly, making paid/organic overlap visible in the data rather than hidden in a blended conversion report.

The practical mitigation: use data-driven attribution in GA4, import offline conversions from your CRM, and treat any revenue figure that can't be reconciled to a CRM pipeline record with appropriate skepticism.

Conclusion

SEO forecasting isn't a one-time deliverable — it's a discipline. The teams that build forecasts stakeholders actually trust aren't using more sophisticated models than everyone else. They're being more honest about assumptions, more rigorous about data quality, and more willing to show uncertainty ranges instead of hiding behind a single projected number.

The practical starting point: clean your data segmentation first (brand vs non-brand, intent groups, page cohorts), pick two complementary SEO forecasting methods from the list above, and build your uncertainty bands before you build your headline number. A forecast that shows a range with named assumptions will survive a bad quarter far better than a point estimate that turned out wrong. And in 2025–2026, with AI Overviews collapsing CTR on informational queries by 40–60%, any forecast that doesn't explicitly model that scenario is already wrong before the period starts.

For teams that run both organic and paid, the combination of GSC data, GA4 event tracking, CRM pipeline data, and Google Ads impression share gives you a forecasting foundation most competitors are still missing. That's where SEO forecasting for budget planning stops being a guessing game and starts being a planning tool finance actually respects.

FAQ

What is SEO forecasting and how does it work?

SEO forecasting is the process of projecting future organic search performance — traffic, rankings, conversions, or revenue — based on historical data, keyword opportunity, and planned actions. It works by combining trend analysis, CTR curve modeling, and conversion rate assumptions into a structured model. The output can be a simple SEO forecasting spreadsheet or a probabilistic range depending on the complexity of the site and the audience for the forecast.

How do you forecast organic traffic using Google Search Console?

Export impression, click, and position data from GSC by page and query. Use impression trends as a demand proxy, apply position-specific CTR estimates calibrated to your actual GSC data (not industry tables), and project traffic based on ranking improvement assumptions. For Google Search Console forecasting to be reliable, you need at least 12 months of data, preferably stored in BigQuery via the GSC bulk export to avoid the hard 16-month data retention limit.

What is the best SEO forecasting method for stakeholders and budgets?

For an SEO forecast for stakeholders, scenario forecasting with explicit assumptions tends to land best: it shows conservative, base, and aggressive cases with the inputs clearly labeled. Finance teams respond better to a range with named assumptions than a single number with no context. For SEO forecasting for budget planning specifically, pair scenario forecasting with a regression driver model so you can show which planned investments drive which portion of the projected uplift.

How can Google Ads data improve an SEO forecast?

Google Ads provides real conversion rate data by query intent, actual demand volume via impression share, and auction-level insight into how competitive a keyword cluster is. Using paid conversion rates as organic conversion rate proxies — especially for high-intent transactional queries — produces more accurate SEO revenue forecasting than industry benchmarks. Teams using agency Google Ads accounts through YeezyPay get access to full impression share history across accounts, which makes demand modeling for organic forecasting significantly more precise.

How do you forecast SEO revenue from traffic and conversions?

Map organic traffic projections to conversion rates by intent segment from CRM data, then multiply by average order value or average deal size. For SEO forecasting for ecommerce: sessions × conversion rate × AOV. For SEO forecasting for lead generation: sessions × lead rate × close rate × ACV. As Shopify's Arthur Camberlein recommends: combine first-party conversion data with third-party keyword opportunity data for the most defensible model. Reconcile the output against CRM pipeline data at least monthly — if the model's revenue projection doesn't match what sales is seeing, the conversion rate or attribution assumptions are wrong.